Shard Postgres with one command

Apr 13th, 2026

Lev Kokotov

PgDog is on a mission to shard PostgreSQL. To make this work, it needs to be possible without downtime, and with little to no intervention from the user. On the road to that ambitious goal, we built the RESHARD command.

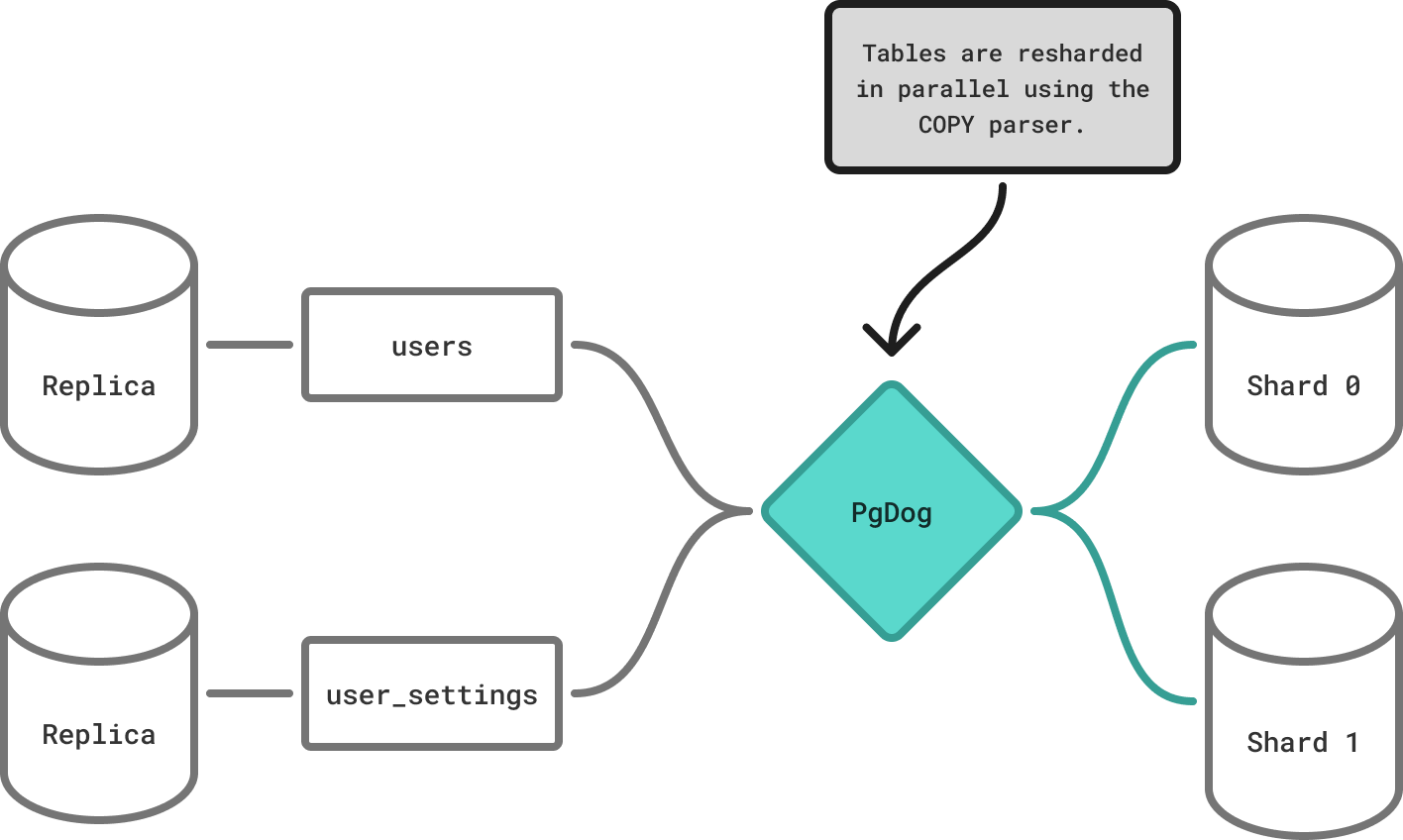

This command takes an existing PostgreSQL database cluster of N shards (including N = 1 for regular, unsharded databases) and splits it, using the COPY protocol and logical replication, between M new shards. Once the data is distributed, PgDog stops application queries, synchronizes the data on all shards, and atomically moves the query traffic to the new, now M-sharded database.

All these steps are handled by our open source code, which makes this process repeatable, verifiable and automatic.

How it works

If you’re running PgDog already, you might be familiar with the admin database. It’s a virtual, in-memory DB, for running custom, non-SQL commands that make PgDog do something, like reload its config, or show real-time statistics.

The RESHARD command is one of those commands, and takes the following arguments:

RESHARD [source] [destination] [publication]

| Argument | Description | Example |

|---|---|---|

source |

The name of your production database in pgdog.toml. |

prod |

destination |

The name of the new, currently empty, database in pgdog.toml, with a different number of shards. |

prod_2x |

publication |

The name of the PostgreSQL publication that encompasses the tables that need resharding. | pgdog |

Add new shards

To create a database with more shards, PgDog moves all database tables and data to a new cluster. Both databases need to be part of the same configuration in pgdog.toml, for example:

#

# Production DB

#

[[databases]]

name = "prod"

host = "prod.a3iddibb9a0q.us-west-2.rds.amazonaws.com"

#

# New sharded DB

#

[[databases]]

name = "prod_2x"

host = "shard-0.a3iddibb9a0q.us-west-2.rds.amazonaws.com"

shard = 0

[[databases]]

name = "prod_2x"

host = "shard-1.a3iddibb9a0q.us-west-2.rds.amazonaws.com"

shard = 1

prod_2x are a set of empty PostgreSQL database servers, with no schema or data, and which can’t handle application traffic yet. See new databases for requirements, including user setup and multi-database considerations. Since PgDog can reload its configuration online, you can add / remove databases to/from the config without any impact on your application.

To reshard prod into prod_2x, you need to do the following:

- Create a publication for all tables in

prod, e.g.,CREATE PUBLICATION pgdog FOR ALL TABLES; - Run the reshard command:

RESHARD prod prod_2x pgdog;

And that’s it. PgDog takes care of everything else: schema synchronization, moving and resharding data online, and cutting over traffic once the two databases are identical. Your applications don’t need to change their DATABASE_URL, code, or even know this is happening. The rest of this post walks through each step in detail; for the complete reference, see the resharding docs.

Our strategy for resharding Postgres is not unique, but it differs from other sharded databases, like Cassandra or Elasticsearch, so it’s worth taking a quick detour to explain why we are re-creating the whole database from scratch.

The strategy

To shard a PostgreSQL database, PgDog copies all of its tables to a brand new set of databases. It does this using COPY, and streaming any rows that changed in the meantime via logical replication. This is done this way intentionally, and provides a few important benefits.

First, and most important: PgDog performs zero writes to the source database. This is a hard guarantee. We never, under any circumstances, modify your production data during resharding; we only read it, and copy it somewhere else.

PgDog is new, and we haven’t caught all the bugs yet. Never mutating data on the origin ensures it is impossible to corrupt it or break it in a way that can’t be quickly fixed. That on its own, is a good enough reason for us, but there is more.

Copying data is computationally inexpensive and can be offloaded to and parallelized with read replicas. So resharding, effectively, can be done without any impact on the production database. This is important, since sharding is usually done as a last resort, on an already overloaded database. You generally can’t, or really don’t want to, add more writes to production at that point.

PgDog offloads computationally expensive operations to the new cluster, making sure the production database can keep doing its job undisturbed.

Last but not least, moving data to a new cluster requires very little synchronization, compared to in-place resharding. We don’t need a complicated row tracker or esoteric rendezvous hashing to make sure rows are unique across all database servers.

We just take rows from cluster A, and move them to cluster B. Any required synchronization only happens during traffic cutover, and is almost entirely handled by existing primitives managed by PostgreSQL replication slots.

Sharding is not a novel science problem: it’s an operations problem. All we need is to re-use and improve existing tools to make it work.

Configure sharding

PgDog sharding, like all other features, is configurable in pgdog.toml. We currently support sharding by range, list, hash and schema. The latter is a special form of sharding that’s useful for strict, multi-tenant applications. We’ll cover that use case in a different post.

Choosing the right sharding function heavily depends on your use case.

Hash-based sharding is great for high cardinality columns, like user_id. It will distribute your users evenly between M shards and you don’t generally need to worry about hot spots or uneven load between shards.

List-based sharding is good for multi-tenant B2B apps, where tenant_id is countably small, e.g., more than 10 but less than 10,000. This also works if you want to physically isolate your customers on different machines, or have some special insight into how large or small some customers are. This function gives you the most control over where data lands in your sharded PostgreSQL cluster.

Range-based sharding is not used frequently, but can be applied to specialized systems, like geographic partitioning (e.g., city_id, state_id, etc.) Think of it as list-based sharding, but applied to columns with much higher cardinality.

PgDog reshards Postgres one table at a time. The sharding key column, therefore, must be present in all tables for it to work out of the box. We are working on a workaround for this, by querying for the sharding key via foreign key references, but that’s not quite ready yet.

Using hash-based sharding for this example, we can enable it on the new database cluster as follows:

# Shard all tables that have the `user_id` column.

[[sharded_tables]]

database = "prod_2x"

column = "user_id"

data_type = "bigint"

# Shard the `users` table using

# its primary key (`id`) as the sharding key.

[[sharded_tables]]

database = "prod_2x"

column = "id"

table = "users"

data_type = "bigint"

| Argument | Description |

|---|---|

database |

The name of the new, sharded database. In this example, that’s prod_2x. |

column |

The name of the sharding key column that’s present in all tables in the source database. |

table |

Scopes the config entry to only that table (and column). This is useful for handling tables that don’t follow a naming convention for the sharding key. |

data_type |

The data type of the sharding key. PgDog supports sharding bigint, varchar, and uuid. |

The function is applied to all PostgreSQL operations in the exact same way:

- Queries that filter on the sharding key column are routed to the correct shard. e.g.,

SELECT * FROM users WHERE id = $1 - Rows sent via the

COPYprotocol are split up between matching shards accordingly, e.g.,COPY users (id, email) FROM STDIN - Logical replication protocol messages are parsed and re-routed to the right shard based on the table they are targeting, and the sharding key column value

This uniform approach makes sharding reliable, reproducible, and testable. All data flows through the same part of our code base (the query router), which makes adding new functionality reasonably straightforward and all modifications relatively bug-free.

Synchronize schema

Before copying data, PgDog replicates all table definitions, custom types, extensions, and primary key constraints from prod to the new cluster, using pg_dump under the hood.

RESHARDhandles this step automatically. To run it manually or monitor progress, see schema synchronization.

Copy data

With the databases and the sharding function ready to go, we are ready to shard. The first step is to copy tables, while redistributing their rows, in-flight, to the new, sharded database.

RESHARDhandles this step automatically. To run it manually or monitor progress, see Move data.

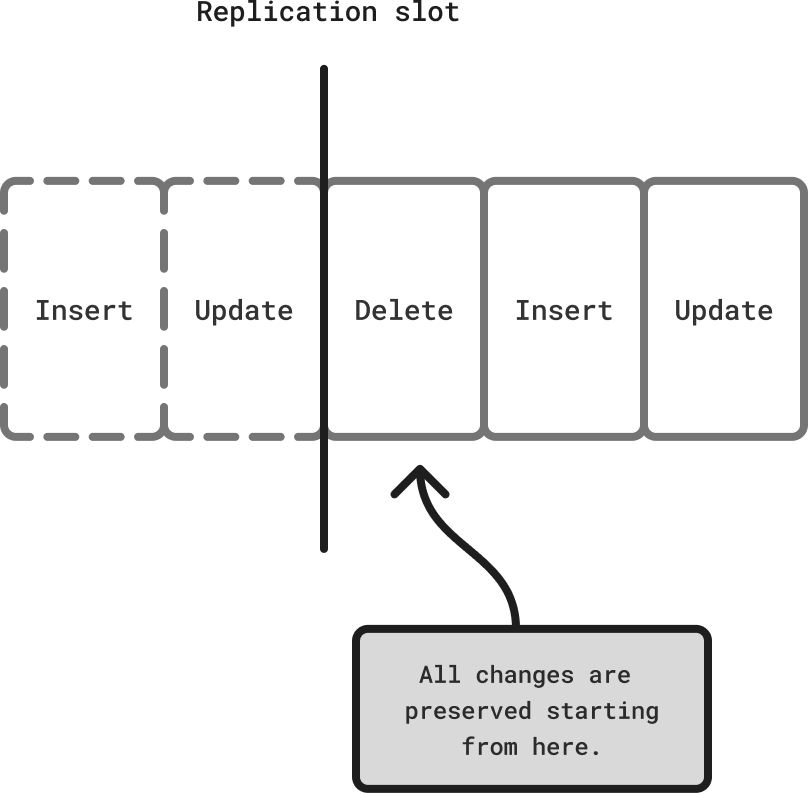

But before we can do this, we need to pin our position in the write-ahead log (WAL). That position, called the LSN, tells us which row changes happened after the copy started and need to be replicated once it finishes.

This is done using PostgreSQL replication slots and the algorithm we use is taken directly from the CREATE SUBSCRIPTION command, with a few tweaks of our own.

Replication slot

The RESHARD command creates a replication slot on all shards in the origin database. For unsharded (N = 1) clusters, that’s just the primary. For resharding N into M (with N > 1), this will create N replication slots, one for each origin shard.

Since we plan to use logical replication to stream row changes, we need a special connection to Postgres that uses the streaming replication protocol. This is the same mechanism that’s used by read replicas, is well documented and has several production-grade implementations, which we read before writing our own.

While the table copy is running, the replication slot(s) are left idle, forcing PostgreSQL to accumulate its write-ahead log files on disk.

Disk space: While the table copy is running, the replication slot keeps PostgreSQL from recycling its WAL, so it accumulates on disk until resharding completes. To estimate how much space you need, check how much WAL your database writes per second. Most managed providers, including RDS and Aurora, expose this metric. Multiply that rate by the expected copy duration and add a comfortable buffer. On RDS or Aurora, a few extra terabytes is cheap insurance.

We are working on a feature to offload the WAL streaming and storage to S3. This will make this operation pretty much unnoticeable, but that feature isn’t ready yet.

Copy tables

With our position in the write-ahead log locked, we can start copying and resharding tables. For each table in the publication, PgDog will run a separate COPY [table] TO STDOUT command, parse the rows, and send them via COPY [table] FROM STDIN to the new shards.

The copy protocol is already handled in our query router, so it reuses the same code to parse and route rows.

To make this faster, we use the binary copy protocol. Instead of serializing each row into human-readable text, it sends the bytes as they are stored on disk, without any pre/post processing. This speeds up the copy by almost 30%; we measured it and it’s really noticeable.

Even with the binary protocol, copying tables can take a while. Normally, this is bottlenecked by disk writes on the destination database, but since PgDog splits the rows between multiple shards, the write capacity is multiplied by M. That makes resharding actually throttled by the read capacity of the source database. To make it faster, PgDog takes advantage of read replicas and can copy tables in parallel.

Replica parallelization

Since PostgreSQL 16, we are able to create logical replication subscriptions to streaming read replicas. This feature, seemingly unrelated, opens the door to a cool use case: we can read different data from different replicas, at the same time, while maintaining a synchronization point between each read operation.

If you’re on AWS, or any other managed Postgres host that separates storage and compute by using networked disks, you can create read replicas really quickly. Resharding-only replicas can use bigger machines and faster disks (e.g., io2 or io3), allowing PgDog to read and copy data even faster.

To make sure your app doesn’t use these replicas for regular queries, we support flagging them with resharding_only = true in pgdog.toml:

# Production database.

[[databases]]

name = "prod"

host = "prod.a3iddibb9a0q.us-west-2.rds.amazonaws.com"

#

# Resharding-only replicas.

#

[[databases]]

name = "prod"

host = "prod-resharding-replica-1.a3iddibb9a0q.us-west-2.rds.amazonaws.com"

resharding_only = true

[[databases]]

name = "prod"

host = "prod-resharding-replica-2.a3iddibb9a0q.us-west-2.rds.amazonaws.com"

resharding_only = true

PgDog will execute one COPY command per replica database and table. We benchmarked this in RDS with 4 replicas and 4 shards and managed to move data at 1 GB/second. If you have, say, a 1 TB database, we could reshard it in about 16 minutes. That’s a bit of a game changer, but I’m of course a little biased.

The underlying implementation is worth a brief detour. Postgres implements ACID compliance using MVCC (multi-version concurrency control), so at any given moment, there are multiple versions of the same table visible to different clients. This property allows applications to have a consistent and immutable view into the database while executing multiple queries inside the same transaction.

We take advantage of this property and can atomically copy tables from one database to another using just a few Postgres commands:

| Command | Description |

|---|---|

BEGIN READ COMMITTED |

Start a transaction, creating a virtual version of some database table. |

CREATE_REPLICATION_SLOT users_copy TEMPORARY |

Create a temporary replication slot, which gives us the current position in the write-ahead log (LSN). Replication slots, if specified, can follow transaction semantics. |

COPY users TO STDOUT (format BINARY) |

Copy this version of the table to the destination shards. |

COMMIT |

Close the transaction, removing the temporary replication slot. |

Since the LSN is a monotonically increasing integer, when the time comes for streaming row changes, we can ignore any updates that took place before the recorded position in the write-ahead log. This guarantees there are no duplicate rows on the new shards.

To be absolutely sure, before starting the resharding process, we validate that all tables in the publication have a primary key constraint. Logical replication streams entire rows, so if we somehow received two identical copies of the same row, the destination shard would raise a unique constraint error.

More interestingly, this constraint allows us to replace logical inserts with upserts (ON CONFLICT ([primary key]) DO UPDATE). Using upserts allows us to “rewind” and re-apply the replication stream, at our discretion, a powerful tool in our toolkit.

An idempotent replication stream also allows us to stream omnisharded tables (tables with identical data on all shards), which are mutated on different shards simultaneously, without relying on complex external synchronization primitives.

A solid foundation (that’s PostgreSQL, of course) makes for a flexible and powerful architecture.

Monitoring

Even with all of our optimizations, copying tables can take a while. You can monitor this process in the admin database by executing commands against the same admin DB used for resharding. You’ll get a real-time view into which tables are copied, and how many rows (and bytes) have been copied so far, along with other useful information:

| Command | Description |

|---|---|

SHOW REPLICATION_SLOTS |

Show replication slots created on each shard in the origin cluster, with their status and LSN. |

SHOW SCHEMA_SYNC |

Show which commands PgDog is running to copy the table schemas from origin cluster to the destination. This is useful when monitoring secondary index creation that can take a while. |

SHOW TABLE_COPIES |

Show which tables are being copied and resharded, with real-time statistics. |

Replicate rows

Once all the tables are copied to the M-sharded database, PgDog will start reading row changes from the replication slot we created in the first step. The Postgres replication protocol is well documented, machine-readable, and provides us with all the necessary metadata we need to correctly route the row change to the right shard:

- The table name

- The entire row update

- The LSN of the transaction that created the row version

Since we recorded the LSN for each table, we can safely ignore any row versions we already have. For all new row versions, we convert the replication message into a regular Postgres query, and push the change to its corresponding shard:

| Message | Query |

|---|---|

Begin |

BEGIN |

Insert |

INSERT INTO [table] ([columns]) VALUES ([values]) ON CONFLICT [primary key] DO UPDATE SET [columns / values] |

Update |

UPDATE [table] SET [columns / values] WHERE [primary key] |

Delete |

DELETE FROM [table] WHERE [primary key] |

Commit |

COMMIT |

Just like regular queries, all row changes respect transaction semantics.

Rewriting replication protocol messages into queries has two notable benefits. First, we can arbitrarily fan-out these queries to any shard(s), without them having to connect to PgDog using the replication protocol. That would have been very hard to get right, otherwise.

The second benefit is we can re-use our query router code to stream and route logical replication changes, just like we do for normal queries. Writing and maintaining less code is actually better (sorry, Claude).

“Draining” the replication slot will take a bit of time, because it accumulated quite a few changes while the table copies were running. You can track progress by running SHOW REPLICATION_SLOTS in the admin database. The lag column shows how many bytes remain. Once it hits zero, PgDog will move application traffic to the new shards automatically, with effectively zero downtime.

Cutover

Once the replication slot changes are replicated (and resharded), the two databases become almost identical: data in production is evenly split between the new shards, and PgDog is replicating transactions in real-time. The replication lag between the two databases is less than a few milliseconds.

However, in order to move query traffic to the new cluster, the replication lag has to come down to zero. The only way to do this is to stop all write queries from reaching the production database.

RESHARDhandles this step automatically. To trigger it manually, configure thresholds, or understand rollback options, see traffic cutover.

While this sounds like it would cause errors and downtime, the entire operation takes less than 1 second. To the app, this feels like the database suddenly became slow and unresponsive. Unless your app has a really short API request timeout, this will cause it to just wait.

Once the lag is zero, PgDog updates its own configuration and swaps the two databases: prod_2x becomes prod and prod becomes prod_2x. All other settings, including the database shards, remain the same.

Before resuming traffic, PgDog creates a logical replication connection from the new shards to the old production database. This allows you to rollback this entire operation in case something goes wrong, without losing any data. Since the writes are paused, setting up the “reverse replication” as we call it, is immediate and requires no additional steps or waiting for data to synchronize.

With the reverse replication running, PgDog resumes query traffic, with paused and new queries now flowing to the new, sharded database.

And that’s it.

When things go wrong

Resharding is a complex, multi-step operation and not everything will go smoothly every time. A table might be missing a primary key, pg_dump versions might not match, WAL can fill up disk mid-copy, or a cutover can time out under high write load. In most cases, the most reliable fix is to start over: drop the destination databases, re-create them from scratch, and run RESHARD again. Since PgDog never writes to the source database, restarting is always safe.

The one thing worth knowing before you do: drop the replication slot on the source before retrying. An orphaned slot will keep accumulating WAL indefinitely and can eventually cause the source database to stop accepting writes.

If you run into something that doesn’t resolve with a retry, get in touch. Edge cases are exactly what we want to hear about.

Closing thoughts

True automation is a hard target and we’re not quite there yet. Even with resharding done almost entirely by PgDog, there is a lot of preparation that needs to be done before running the RESHARD command will result in a successful operation 100% of the time.

If you’re thinking about sharding your database, get in touch. Most of this code is already running in production with our customers. We’re always looking for more deployments and edge cases. On a somewhat related note, we have an exciting funding update to share soon. PgDog is going to be around for a long, long time.